Data mining the internet

Two things totally fascinate me.

The first is secure certificates and the second is data analytics/data mining: the practice of collecting data about something and then using that data to extract more information from it.

Because of these two interests, a few months back I decided to see if I could extract information about a site's HTTPS cert and store it in a database. Like a lot of projects in IT, some feature creep crept in, and I ended up extending the data collection to IP address(es), DNS names, redirections and so on.
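For anyone wondering what that kind of collection involves, here is a minimal sketch in Python using only the standard library. It is not the actual scanning script; the `certs` table, its columns and the function names are all assumptions made up for illustration.

```python
import socket
import sqlite3
import ssl
from datetime import datetime, timezone

# Hypothetical schema; the real database layout is not described in this post.
# not_after and scanned_at are stored as ISO 8601 strings in UTC.
SCHEMA = """
CREATE TABLE IF NOT EXISTS certs (
    host       TEXT PRIMARY KEY,
    ip         TEXT,
    issuer     TEXT,
    not_after  TEXT,
    scanned_at TEXT
)
"""


def fetch_cert(host, port=443, timeout=5.0):
    """Connect to host and return (ip, cert), where cert is the getpeercert() dict.

    The default context validates the chain, so an expired or untrusted
    certificate raises ssl.SSLCertVerificationError instead of returning data.
    """
    ctx = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        ip = sock.getpeername()[0]
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return ip, tls.getpeercert()


def store_cert(conn, host, ip, cert):
    """Insert or update one row from a getpeercert()-style dict."""
    # "issuer" is a tuple of RDNs, each of which is a tuple of (name, value) pairs.
    issuer = dict(pair for rdn in cert.get("issuer", ()) for pair in rdn)
    expiry = datetime.fromtimestamp(
        ssl.cert_time_to_seconds(cert["notAfter"]), tz=timezone.utc
    )
    conn.execute(
        "INSERT OR REPLACE INTO certs VALUES (?, ?, ?, ?, ?)",
        (
            host,
            ip,
            issuer.get("organizationName") or issuer.get("commonName"),
            expiry.isoformat(),
            datetime.now(timezone.utc).isoformat(),
        ),
    )
    conn.commit()
```

A scan of one site would then be roughly `ip, cert = fetch_cert(host)` followed by `store_cert(conn, host, ip, cert)`. Because this sketch validates certificates, a real scanner that wants details of expired certs would also need to catch the verification error and record it.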

After letting the data collection scripts run for a while, I posted a little about what I’d found in scanning the internet in [Examining Secure Certs across the internet].

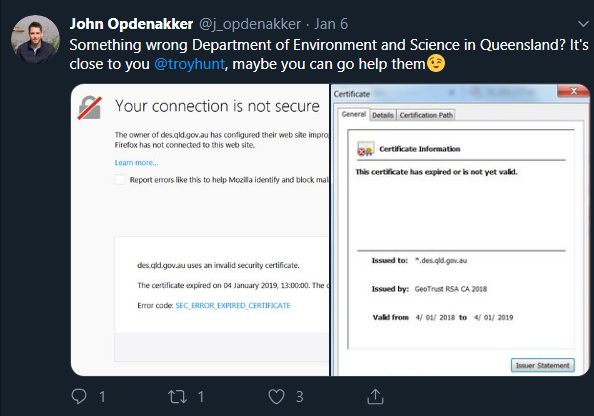

That blog garnered quite a good response on LinkedIn and prompted a few suggestions about what else I could add to the data collection phase and what sort of data I could extract from the database. Over Christmas I made a few tweaks and just left it scanning. Last week, I happened to see this tweet by https://twitter.com/j_opdenakker

The cert he mentions is for .des.qld.gov.au, a government-backed domain in Queensland, Australia.

I was curious whether the certs database had any of these qld.gov.au domains in it, so I started digging through the database and found 475 records.

A lot of the sites hadn’t been scanned in ages, so the first thing to do was mark all those records and then fire off a scan of those specific sites. The scan took longer than I expected and didn’t complete until the next day.
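The lookup and the marking step could be sketched along these lines. Again, this is only an illustration: the `certs` table, the `needs_rescan` flag and the 30-day threshold are assumptions, not the real schema or settings.

```python
import sqlite3
from datetime import datetime, timedelta, timezone

# Assumes a hypothetical table: certs(host, scanned_at, needs_rescan, ...),
# with scanned_at stored as an ISO 8601 UTC string.


def find_hosts(conn, suffix):
    """Return hosts equal to suffix or ending in '.' + suffix."""
    rows = conn.execute(
        "SELECT host FROM certs WHERE host = ? OR host LIKE ? ORDER BY host",
        (suffix, "%." + suffix),
    )
    return [r[0] for r in rows]


def mark_stale(conn, suffix, max_age_days=30, now=None):
    """Flag matching hosts last scanned over max_age_days ago; return the count."""
    now = now or datetime.now(timezone.utc)
    cutoff = (now - timedelta(days=max_age_days)).isoformat()
    cur = conn.execute(
        "UPDATE certs SET needs_rescan = 1 "
        "WHERE (host = ? OR host LIKE ?) "
        "AND (scanned_at IS NULL OR scanned_at < ?)",
        (suffix, "%." + suffix, cutoff),
    )
    conn.commit()
    return cur.rowcount
```

Matching on `'%.' + suffix` rather than `'%' + suffix` keeps lookalike names such as `fakeqld.gov.au` out of the results.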

Once the full list had been rescanned, the results proved to be quite interesting.

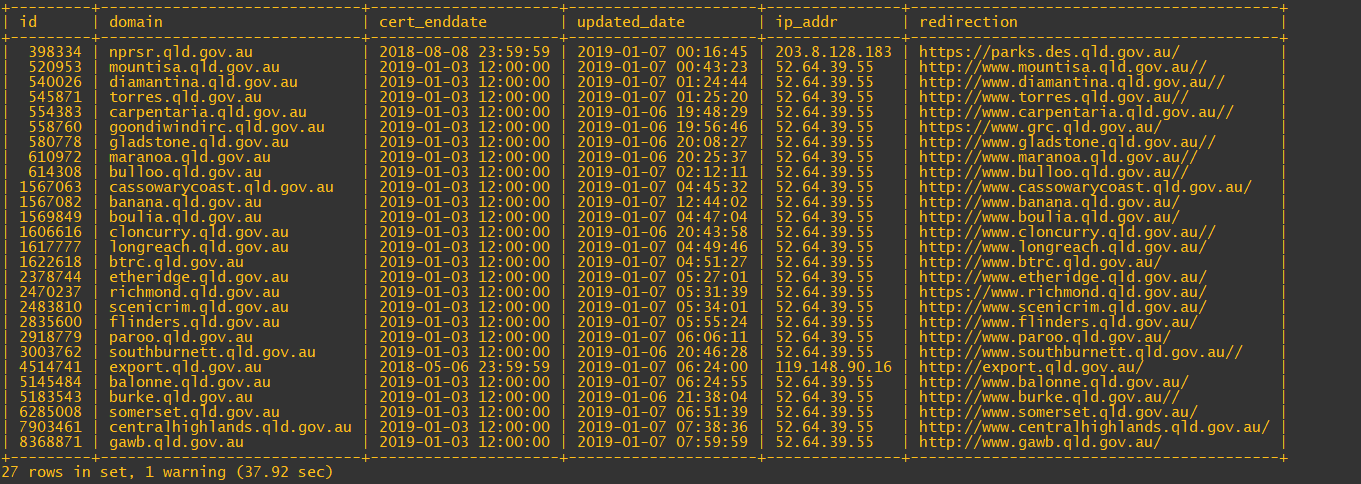

Of the 475 domains in the database, 27 have expired certificates:

Depressingly, quite a few of those expired sites redirect to HTTP and not HTTPS.

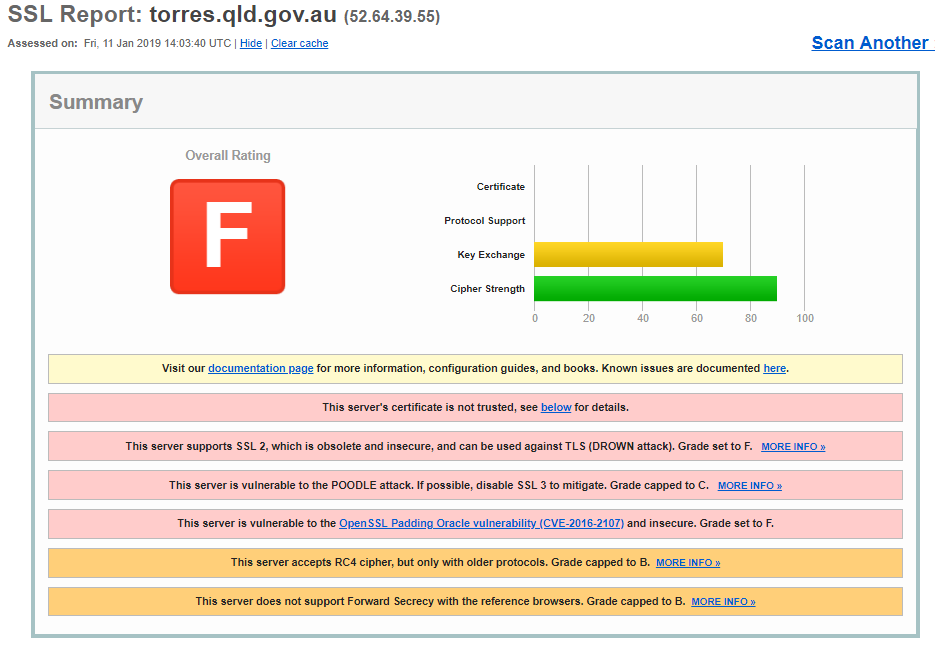
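The two checks behind those findings are simple enough to sketch. The function names are hypothetical, and the sketch assumes the expiry date is stored as an ISO 8601 UTC string and that the scanner has recorded the redirect's `Location` value.

```python
from datetime import datetime, timezone
from urllib.parse import urlsplit


def is_expired(not_after_iso, now=None):
    """True when the stored expiry timestamp is at or before `now` (UTC)."""
    now = now or datetime.now(timezone.utc)
    return datetime.fromisoformat(not_after_iso) <= now


def redirects_to_http(location):
    """True when a redirect target is an absolute plain-HTTP URL."""
    # A relative Location has no scheme and stays on the original scheme.
    return urlsplit(location).scheme == "http"
```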

I was going to leave it there, but over Christmas I’d been tinkering with testing the sites for SSL 3.0, TLS 1.0 and so on. I felt sure that the SSL 3.0 test would be useless; after all, it’s been known for ages that SSL 3.0 is insecure. Still, I thought I’d see how the script handled scanning those sites and which of them had SSL 3.0 enabled. When I saw a list of 25 sites with SSL 3.0 enabled, I thought the script had a bug in it, so I paid a quick visit to one of them, ran a scan on Qualys and...

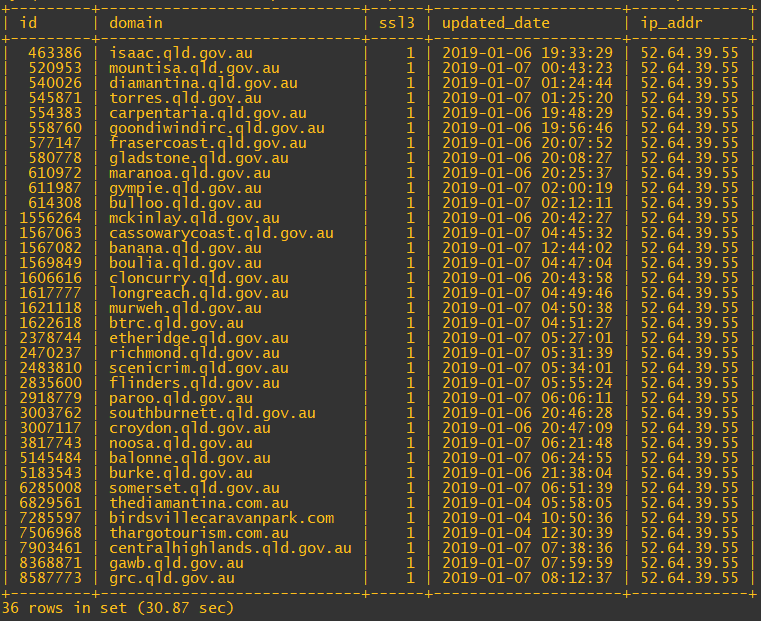
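A protocol test like this can be sketched in Python by pinning a TLS context to a single version and attempting a handshake. This is an assumption about how such a test might work, not the script used here. Note that many current OpenSSL builds ship without SSL 3.0 at all, and some distributions also block TLS 1.0 through their security policy, so the sketch returns `None` when the local machine cannot make the attempt.

```python
import socket
import ssl

# Whether the local OpenSSL build can speak each protocol version at all.
LOCAL_SUPPORT = {
    ssl.TLSVersion.SSLv3: ssl.HAS_SSLv3,
    ssl.TLSVersion.TLSv1: ssl.HAS_TLSv1,
    ssl.TLSVersion.TLSv1_1: ssl.HAS_TLSv1_1,
    ssl.TLSVersion.TLSv1_2: ssl.HAS_TLSv1_2,
    ssl.TLSVersion.TLSv1_3: ssl.HAS_TLSv1_3,
}


def supports_version(host, version, port=443, timeout=5.0):
    """Return True if a handshake pinned to `version` succeeds, False if it
    fails, or None if this machine cannot attempt that version.

    False also covers unreachable hosts, so treat it as "not confirmed".
    """
    if not LOCAL_SUPPORT.get(version, False):
        return None
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    # Only the protocol is being tested here, so skip certificate validation.
    ctx.check_hostname = False
    ctx.verify_mode = ssl.CERT_NONE
    try:
        ctx.minimum_version = version
        ctx.maximum_version = version
    except ValueError:
        return None
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            with ctx.wrap_socket(sock, server_hostname=host):
                return True
    except (ssl.SSLError, OSError):
        return False
```

Calling `supports_version(host, ssl.TLSVersion.SSLv3)` for each site in turn would give a rough list to cross-check against a dedicated scanner.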

Wow. Not good, not good at all. Another thing that stood out about the list of expired certs was the number of times the IP 52.64.39.55 came up. I thought it would be interesting to pull a list of the sites that have that IP address allocated out of the DB.
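Pulling that kind of list is a pair of simple queries. The sketch below assumes a hypothetical `certs` table with `host`, `ip` and `not_after` columns (expiry stored as an ISO 8601 UTC string); the real schema may well differ.

```python
import sqlite3


def hosts_on_ip(conn, ip):
    """Return every host recorded against the given IP address."""
    rows = conn.execute(
        "SELECT host FROM certs WHERE ip = ? ORDER BY host", (ip,)
    )
    return [r[0] for r in rows]


def expired_per_ip(conn, now_iso):
    """Return (ip, count) pairs for expired certs, most frequent IP first."""
    return conn.execute(
        "SELECT ip, COUNT(*) AS n FROM certs WHERE not_after < ? "
        "GROUP BY ip ORDER BY n DESC, ip",
        (now_iso,),
    ).fetchall()
```

The second query is what surfaces an address that keeps turning up among the expired certs; the first then lists everything sitting behind it, for example `hosts_on_ip(conn, "52.64.39.55")`.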

I thought it interesting that there appears to be at least one non-gov.au site behind that ELB. Presumably the site is run by Queensland authorities and so is part of a deployment done by the IT group that set up that ELB.

This has been an interesting little experiment, and it shows just what is possible with a little bit of data mining and access to a few tools. Now imagine what the bad guys can do.
